개발잡부

[tf] RNN project


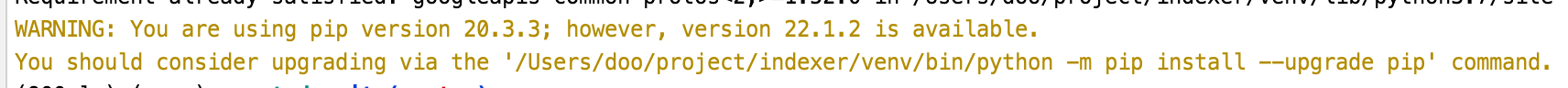

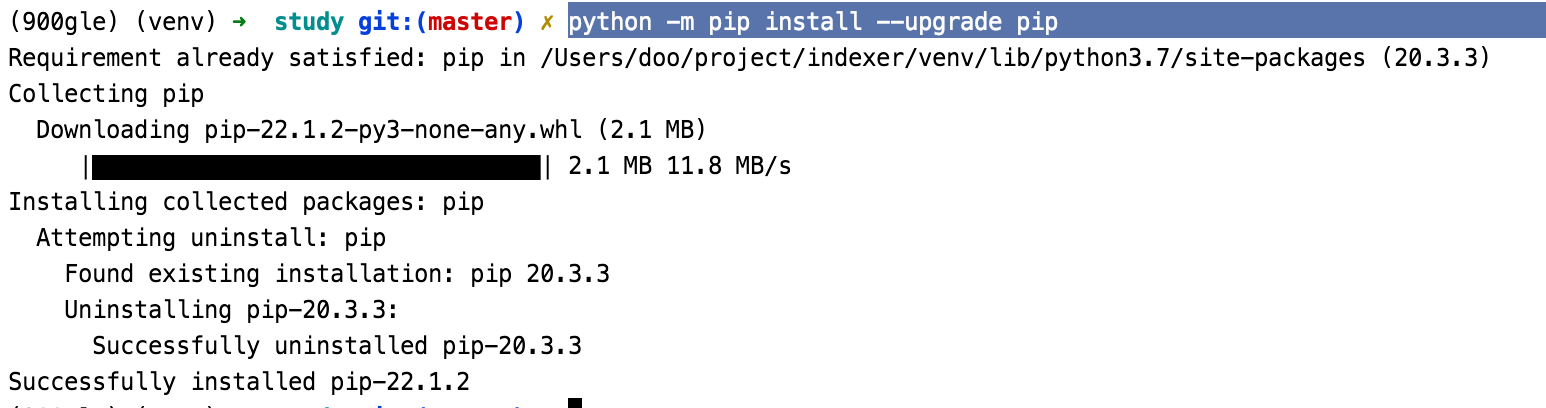

I wanted to create a new conda environment, but to save time I reused the existing 900gle environment.

    conda activate 900gle
    python -m pip install --upgrade pip

Study.py

    import numpy as np
    import tensorflow_datasets as tfds
    import tensorflow as tf

    tfds.disable_progress_bar()

Running it in the 900gle environment fails:

    ImportError: This version of TensorFlow Datasets requires TensorFlow version >= 2.1.0; Detected an installation of version 1.14.0. Please upgrade TensorFlow to proceed.

Ugh..

So I created a new virtual environment.

    conda create --name "rnn" python="3.7"
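
The ImportError above boils down to a version comparison: TensorFlow Datasets asked for TensorFlow >= 2.1.0 and found 1.14.0. Below is a minimal sketch of that kind of check, handy for sanity-checking a fresh environment before running Study.py. The helpers `parse_version` and `meets_minimum` are hypothetical names made up for this illustration and are not part of TensorFlow or TFDS. In a real environment the installed version would come from `tf.__version__`.

```python
import re


def parse_version(version):
    """Return the leading 'major.minor.patch' of a version string as a tuple of ints."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.groups())


def meets_minimum(installed, required="2.1.0"):
    """Hypothetical helper: True if `installed` is at least `required`."""
    return parse_version(installed) >= parse_version(required)


# The two versions named in the error message.
print(meets_minimum("1.14.0"))  # False: 1.14.0 is older than 2.1.0
print(meets_minimum("2.1.0"))   # True
```

Comparing integer tuples rather than raw strings avoids ordering mistakes such as "2.10.0" sorting before "2.9.0".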

    import matplotlib.pyplot as plt
    import numpy as np
    import tensorflow_datasets as tfds
    import tensorflow as tf

    tfds.disable_progress_bar()


    def plot_graphs(history, metric):
        # Plot the training and validation curves of one metric.
        plt.plot(history.history[metric])
        plt.plot(history.history['val_' + metric], '')
        plt.xlabel("Epochs")
        plt.ylabel(metric)
        plt.legend([metric, 'val_' + metric])


    # Load the IMDB reviews as (text, label) pairs.
    dataset, info = tfds.load('imdb_reviews', with_info=True, as_supervised=True)
    train_dataset, test_dataset = dataset['train'], dataset['test']

    # Inspect a single example.
    for example, label in train_dataset.take(1):
        print('text : ', example.numpy())
        print('label : ', label.numpy())

    BUFFER_SIZE = 10000
    BATCH_SIZE = 64

    train_dataset = train_dataset.shuffle(BUFFER_SIZE).batch(BATCH_SIZE).prefetch(tf.data.AUTOTUNE)
    test_dataset = test_dataset.batch(BATCH_SIZE).prefetch(tf.data.AUTOTUNE)
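
To make the `shuffle(BUFFER_SIZE).batch(BATCH_SIZE)` chain less of a black box, here is a conceptual pure-Python sketch of a buffered shuffle followed by batching. It is not how tf.data is implemented internally. The function names `buffered_shuffle` and `batch` are hypothetical, and the sketch only mirrors the documented idea that elements are drawn at random from a fixed-size buffer that is refilled from the stream.

```python
import random


def buffered_shuffle(items, buffer_size, seed=0):
    """Yield items in pseudo-random order using a buffer of at most buffer_size (>= 1) elements."""
    rng = random.Random(seed)
    buffer = []
    for item in items:
        if len(buffer) < buffer_size:
            buffer.append(item)  # still filling the buffer
            continue
        idx = rng.randrange(buffer_size)
        yield buffer[idx]        # emit a random buffered element
        buffer[idx] = item       # and replace it with the incoming one
    rng.shuffle(buffer)          # drain what is left at the end of the stream
    yield from buffer


def batch(items, batch_size):
    """Group items into lists of batch_size; the last batch may be smaller."""
    current = []
    for item in items:
        current.append(item)
        if len(current) == batch_size:
            yield current
            current = []
    if current:
        yield current


batches = list(batch(buffered_shuffle(range(10), buffer_size=4), batch_size=3))
print([len(b) for b in batches])  # [3, 3, 3, 1]
```

With a buffer smaller than the dataset, an element can only move a limited distance from its original position, so the shuffle is approximate. A buffer at least as large as the dataset gives a full shuffle.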

    # Print the first three texts and labels of one batch.
    for example, label in train_dataset.take(1):
        print('text : ', example.numpy()[:3])
        print()
        print('label : ', label.numpy()[:3])

    VOCAB_SIZE = 1000

    # Build the vocabulary from the training texts only.
    encoder = tf.keras.layers.experimental.preprocessing.TextVectorization(max_tokens=VOCAB_SIZE)
    encoder.adapt(train_dataset.map(lambda text, label: text))

    vocab = np.array(encoder.get_vocabulary())
    print(vocab[:20])

    # Encode the first three texts of the batch as integer token ids.
    encoded_example = encoder(example)[:3].numpy()
    print(encoded_example)
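
As a rough picture of what the encoder does with its default settings, the sketch below lowercases the text, strips punctuation, splits on whitespace, keeps the most frequent tokens, and maps each token to an integer id, with 0 reserved for padding and 1 for unknown tokens. This is a simplified stand-in, not the Keras implementation. The names `standardize`, `build_vocab`, and `encode` are hypothetical, and details such as tie-breaking between equally frequent tokens are assumptions.

```python
import re
import string
from collections import Counter


def standardize(text):
    """Lowercase and remove ASCII punctuation."""
    return re.sub(f"[{re.escape(string.punctuation)}]", "", text.lower())


def build_vocab(texts, max_tokens):
    """Vocabulary of at most max_tokens entries; ids 0 and 1 are reserved."""
    counts = Counter(tok for t in texts for tok in standardize(t).split())
    most_common = [tok for tok, _ in counts.most_common(max_tokens - 2)]
    return ["", "[UNK]"] + most_common


def encode(texts, vocab):
    """Map tokens to ids (1 for out-of-vocabulary) and right-pad rows with 0."""
    index = {tok: i for i, tok in enumerate(vocab)}
    rows = [[index.get(tok, 1) for tok in standardize(t).split()] for t in texts]
    width = max(len(r) for r in rows)
    return [r + [0] * (width - len(r)) for r in rows]


texts = ["Good, good, good, good movie!", "Bad movie... movie"]
vocab = build_vocab(texts, max_tokens=4)
print(vocab)                 # ['', '[UNK]', 'good', 'movie']
print(encode(texts, vocab))  # [[2, 2, 2, 2, 3], [1, 3, 3, 0, 0]]
```

The trailing zeros in the second row are the padding that `mask_zero=True` in the Embedding layer is meant to hide from the LSTM.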

    # Reference: tf.keras.layers.LSTM constructor signature with its default values (not meant to be run as-is).
    tf.keras.layers.LSTM(
        units, activation='tanh', recurrent_activation='sigmoid',
        use_bias=True, kernel_initializer='glorot_uniform',
        recurrent_initializer='orthogonal',
        bias_initializer='zeros', unit_forget_bias=True,
        kernel_regularizer=None, recurrent_regularizer=None, bias_regularizer=None,
        activity_regularizer=None, kernel_constraint=None, recurrent_constraint=None,
        bias_constraint=None, dropout=0.0, recurrent_dropout=0.0,
        return_sequences=False, return_state=False, go_backwards=False, stateful=False,
        time_major=False, unroll=False, **kwargs
    )
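
To connect the `activation='tanh'` and `recurrent_activation='sigmoid'` defaults to what an LSTM computes, here is a scalar sketch of a single LSTM time step using the standard LSTM equations. It is a toy with one input feature and one unit. Keras works on batched matrices, and the function `lstm_step` and its dict-based weight layout are hypothetical choices made for readability.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, w, u, b):
    """One LSTM step for a single unit.

    w, u, b are dicts keyed by 'i', 'f', 'c', 'o' holding the input weight,
    recurrent weight, and bias of each gate (hypothetical layout).
    """
    # Gates use the recurrent activation (sigmoid).
    i = sigmoid(w['i'] * x + u['i'] * h_prev + b['i'])  # input gate
    f = sigmoid(w['f'] * x + u['f'] * h_prev + b['f'])  # forget gate
    o = sigmoid(w['o'] * x + u['o'] * h_prev + b['o'])  # output gate
    # Candidate cell state and output use the activation (tanh).
    c_tilde = math.tanh(w['c'] * x + u['c'] * h_prev + b['c'])
    c = f * c_prev + i * c_tilde
    h = o * math.tanh(c)
    return h, c


zeros = {'i': 0.0, 'f': 0.0, 'c': 0.0, 'o': 0.0}
h, c = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, w=zeros, u=zeros, b=zeros)
print(c)  # 1.0: with zero weights every gate is 0.5, so half of c_prev is kept
```

With `return_sequences=False`, as in the signature above, only the `h` of the last time step is passed to the next layer.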

    model = tf.keras.Sequential([
        encoder,
        tf.keras.layers.Embedding(
            input_dim=len(encoder.get_vocabulary()),
            output_dim=64,
            # Mask the padding id 0 so the LSTM ignores padded steps.
            mask_zero=True
        ),
        tf.keras.layers.LSTM(64),
        tf.keras.layers.Dense(64, activation='relu'),
        # No activation: the model outputs a single logit.
        tf.keras.layers.Dense(1)
    ])

    model.compile(loss=tf.keras.losses.BinaryCrossentropy(from_logits=True),
                  optimizer=tf.optimizers.Adam(1e-4),
                  metrics=['accuracy'])
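
Because the last `Dense(1)` layer has no activation, the model outputs raw logits, and `from_logits=True` tells the loss to apply the sigmoid itself. The sketch below shows, for a single example, that the numerically stable logit form of binary cross-entropy matches the textbook formula applied to the sigmoid probability. The function names are hypothetical, and the code illustrates the math rather than the Keras implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def bce_from_logit(logit, label):
    """Stable binary cross-entropy computed directly from a logit."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


def bce_from_probability(p, label):
    """Textbook binary cross-entropy on a probability in (0, 1)."""
    return -(label * math.log(p) + (1.0 - label) * math.log(1.0 - p))


logit, label = 2.0, 1.0
print(abs(bce_from_logit(logit, label) - bce_from_probability(sigmoid(logit), label)) < 1e-9)  # True
```

A logit of 0 corresponds to a probability of 0.5, so predictions at or above 0 read as positive reviews and negative values as negative reviews.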

    history = model.fit(train_dataset, epochs=3, validation_data=test_dataset, validation_steps=30)
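
A quick bit of arithmetic shows what `validation_steps=30` means in practice. The sketch assumes the usual `imdb_reviews` split sizes of 25,000 training and 25,000 test reviews. These numbers are not stated in this post and can be checked with `info.splits['train'].num_examples`.

```python
import math

TRAIN_EXAMPLES = 25_000  # assumption: size of the imdb_reviews train split
TEST_EXAMPLES = 25_000   # assumption: size of the imdb_reviews test split
BATCH_SIZE = 64
VALIDATION_STEPS = 30

# The last training batch is partial, hence the ceiling.
steps_per_epoch = math.ceil(TRAIN_EXAMPLES / BATCH_SIZE)
validation_examples = VALIDATION_STEPS * BATCH_SIZE

print(steps_per_epoch)                                # 391
print(validation_examples)                            # 1920
print(round(validation_examples / TEST_EXAMPLES, 4))  # 0.0768
```

Under those assumptions, each epoch validates on only about 8% of the test set, which keeps epochs short. The full test set is still used by `model.evaluate`.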

    test_loss, test_acc = model.evaluate(test_dataset)

    print('Test Loss: {}'.format(test_loss))
    print('Test Accuracy: {}'.format(test_acc))

    # Plot accuracy and loss side by side.
    plt.figure(figsize=(16, 8))
    plt.subplot(1, 2, 1)
    plot_graphs(history, 'accuracy')
    plt.ylim(None, 1)
    plt.subplot(1, 2, 2)
    plot_graphs(history, 'loss')
    plt.ylim(0, None)
    # Needed to display the figure when Study.py is run as a script.
    plt.show()
